- Published 18 March 2026

Why AI Pilots Die Between the Demo and Production, and What’s Actually Behind It

Every enterprise I speak to today has a version of the same story. They ran a pilot, it worked beautifully in a controlled environment, leadership was impressed, and then somewhere between the boardroom and the server room, it quietly stopped. Not with a bang. Just a slow fade into a slide deck that gets referenced occasionally and actioned never.

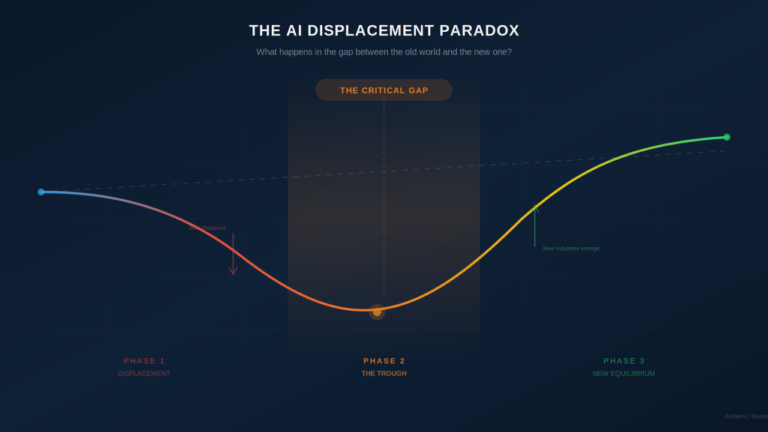

The scale of this moment is hard to ignore. According to Lenovo’s CIO Playbook 2026, developed with IDC research across 3,120 decision makers globally, only half of all AI proofs of concept have now progressed into production. That is a dramatic shift from a year earlier, when IDC’s 2025 Playbook found that 88% of proofs of concept failed to reach production. Organizations are projecting returns of $2.79 for every dollar invested in AI, and 96% plan to increase AI spending over the next twelve months. By every surface metric, the enterprise AI story looks like it is working.

The industry has gotten comfortable calling this the “pilot problem,” as if the pilot itself were flawed. But in most cases the pilot was fine. The model performed, the use case was validated, the team was capable. What broke was everything that came after, and that’s a different problem altogether.

The real issue is that most enterprise AI delivery is built on a consulting model that was designed for a slower era and has never been fundamentally rethought. In that model, every new engagement starts from zero. A team comes in, spends weeks rebuilding context, relearning your architecture, your compliance requirements, your integration patterns, the institutional decisions that have been made over years. You pay for their education. And when the engagement ends, that knowledge walks out the door with them. The next team comes in and the cycle repeats. This is what we have started calling the Learning Tax, and enterprises have been paying it for years without quite naming it.

When timelines were measured in quarters, this inefficiency was expensive but survivable. AI compresses those timelines to weeks. Suddenly the Learning Tax is not just a cost problem; it’s a competitive liability.

And this is precisely where pilots go to die. They succeed in isolation, inside a controlled engagement with a motivated team and a clear sponsor, and then they collide with enterprise reality: security, compliance, scale, existing integrations, change management, none of which were fully embedded in the pilot’s architecture. The prototype that impressed in the boardroom was never designed to survive production. Not because the technology wasn’t good enough, but because the delivery model was never built to carry knowledge, governance, and context forward from one phase to the next.

The Governance Gap Nobody Names

The numbers make the structural problem concrete. The Lenovo CIO Playbook 2026 reveals a striking disconnect: while 60% of organizations consider themselves in late-stage AI adoption, only 27% have a comprehensive governance framework in place. 39% say they need more than twelve months before they will be ready to scale agentic AI, with only 10% considering themselves ready now.

Governance is not a checkbox. It is the infrastructure that lets AI operate at scale without regulatory exposure or eroded trust. When pilots collide with production, it is almost always this gap, not the model, not the data science, that stops them.

BCG’s 10-20-70 framework captures why this is so hard to fix: companies that capture the most value from AI allocate 10% of their effort to algorithms, 20% to technology and data, and 70% to transforming people, processes, and ways of working. Yet most organizations invest the overwhelming majority of their effort in the 30% that covers algorithms and technology. The structural work (governance, change management, ownership clarity) is exactly what the traditional delivery model is worst at preserving across engagements.
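To make the 10-20-70 split tangible, here is a small arithmetic sketch. The shares come from the framework as cited above; the 1,000 person-day total and the function name `split_effort` are made up purely for illustration.

```python
# Shares taken from the 10-20-70 framework as cited in the text.
SHARES = {
    "algorithms": 10,
    "technology_and_data": 20,
    "people_and_process": 70,
}


def split_effort(total_days: int) -> dict[str, int]:
    """Split a total effort figure (in whole days) by the 10-20-70 shares."""
    return {area: total_days * pct // 100 for area, pct in SHARES.items()}


# 1000 person-days is a hypothetical programme size, not a figure from any report.
plan = split_effort(1000)
print(plan)
# {'algorithms': 100, 'technology_and_data': 200, 'people_and_process': 700}
```

On that hypothetical budget, 700 days belong to people, process, and ways of working, which is the portion most likely to be underfunded.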

Rethinking AI Delivery: Compounding Build

Compounding Build came directly from confronting that gap and refusing to look away from what it revealed. We had to question our own model before the market did it for us. The traditional consulting structure, including ours, had the same structural flaw: it treated knowledge as a byproduct of delivery rather than as the asset being built. Expertise accumulated in people’s heads and PowerPoint decks, not in systems. When people moved on, so did everything they knew.

Compounding Build inverts that logic entirely. Every engagement encodes knowledge (architectural decisions, compliance patterns, governance rules, integration standards) into persistent, executable systems. The next initiative doesn’t start from a blank slate; it starts from a foundation that already holds the institutional intelligence of everything that came before. Delivery gets faster. Risk falls. The cost of change decreases over time. Not as a promise, but as a structural property of how the system is built.
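What “governance rules encoded into executable systems” might look like in practice can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any actual Compounding Build implementation: the `Deployment` fields, the rule IDs, and the `violations` helper are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Deployment:
    """Hypothetical description of an AI service proposed for production."""
    name: str
    stores_pii: bool
    encrypted_at_rest: bool
    has_named_owner: bool


@dataclass(frozen=True)
class Rule:
    """One governance rule expressed as an executable check."""
    rule_id: str
    description: str
    check: Callable[[Deployment], bool]


# Hypothetical rule registry: it persists across engagements, so the next
# initiative inherits these checks instead of rediscovering them.
RULES = [
    Rule("GOV-001", "PII must be encrypted at rest",
         lambda d: (not d.stores_pii) or d.encrypted_at_rest),
    Rule("GOV-002", "Every service needs a named owner",
         lambda d: d.has_named_owner),
]


def violations(deployment: Deployment, rules: list[Rule] = RULES) -> list[str]:
    """Return the IDs of every rule the deployment fails."""
    return [r.rule_id for r in rules if not r.check(deployment)]


# An illustrative pilot that handles PII but was never hardened for production.
pilot = Deployment("claims-summarizer", stores_pii=True,
                   encrypted_at_rest=False, has_named_owner=True)
print(violations(pilot))  # ['GOV-001']
```

The point of the design is that the rules live in a shared registry rather than in a slide deck, so a pilot can be checked against them on day one instead of at the production gate.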

The difference this makes to the pilot-to-production journey is significant. When governance is embedded from day one rather than retrofitted after the fact, the path to production doesn’t hit a compliance wall. When architectural patterns are reusable rather than reinvented, integration doesn’t become a six-month detour. When the system is designed for how the enterprise actually operates, not just for how the demo looked, production becomes the natural conclusion of the pilot, not its graveyard.

Conclusion

Both Sides Have to Think Differently

I’ll be honest about something. This shift is harder than it sounds, not technically but organizationally. It requires a consulting partner willing to build systems that make future engagements cheaper, even when the incentive structure of the traditional model rewards the opposite. It requires enterprises willing to think of AI delivery as a compounding capability they are building, not a series of disconnected projects they are procuring. Both sides have to think differently.

But the enterprises that are getting AI into production, genuinely into production and not just extended pilots with a production label, are the ones who have made this shift. They have stopped treating each initiative as its own universe and started treating their AI capability as something that should get stronger with every investment they make in it.